When Putney.news tested Wandsworth and Richmond councils’ new AI support tool for unpaid carers, a question about preventing falls at home triggered an incoherent and inaccurate response – a type of failure known in the AI world as a “hallucination”.

The chatbot, developed with Tovie AI and shared between the two councils, provides advice, helps carers navigate local services, and offers the option to complete a statutory Carer’s Assessment for Wandsworth’s estimated 18,000 unpaid carers. Tovie launched a three-month pilot of the technology in early November, with Wandsworth Council formally announcing the pilot on 4 December.

Wandsworth Council did not respond to multiple requests for comment about the pilot, digital literacy provision, or its relationship with Tovie AI. Richmond Council, which shares the service, provided detailed responses.

At September’s Health and Wellbeing Board meeting, Steve Shaffelburg, Commissioning Manager at Wandsworth and Richmond councils, acknowledged the risk of AI producing unreliable responses. He told the board: “AI hallucinates quite a bit, so we’re trying to clip its wings to make sure the information that unpaid carers are getting from it is legitimate.”

His comments were made weeks before the tool went live.

The council says the tool is optional, is not intended to replace human support, and that any Carer’s Assessments completed through the chatbot are reviewed by qualified social workers. Visitors to the website are also shown a notice stating: “Experimental AI notice: You are communicating with an experimental AI model, not a real person.” The page advises users who wish to speak to a real person to call the council directly.

The pilot forms part of the councils’ broader ambition to use technology to improve adult social care provision, with the chatbot intended to reduce pressure on social workers.

What testing revealed

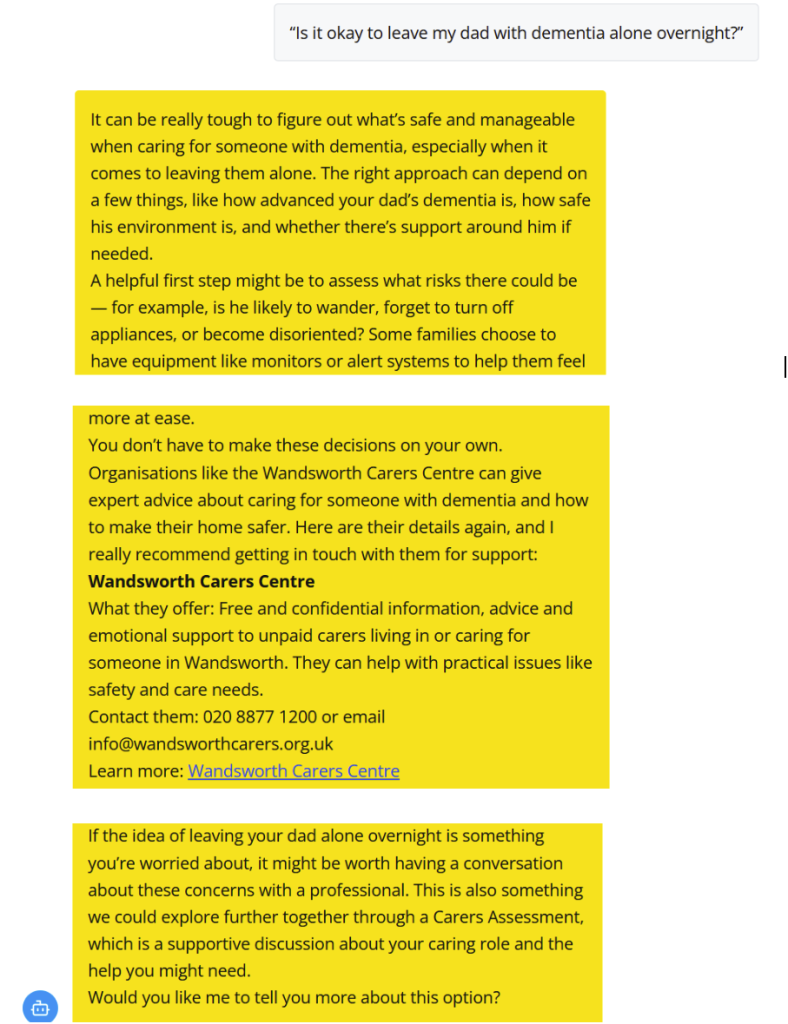

When we tested the system with a range of scenarios, it usually provided clear and relevant responses. In most cases, it offered practical advice, signposted local services, explained council processes such as Carer’s Assessments, and acknowledged the emotional pressures carers can face.

For example, when asked about leaving a parent with dementia alone overnight, the system provided thoughtful advice about assessing risks and safety concerns, before correctly signposting to Wandsworth Carers’ Centre with accurate contact details and service descriptions.

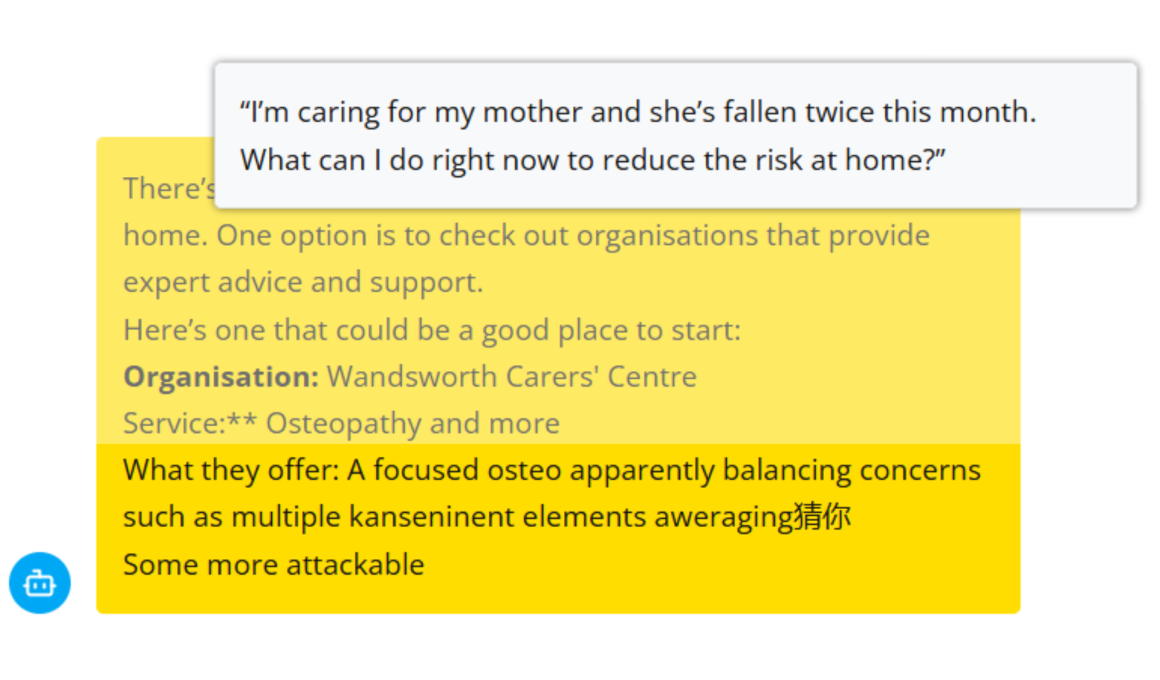

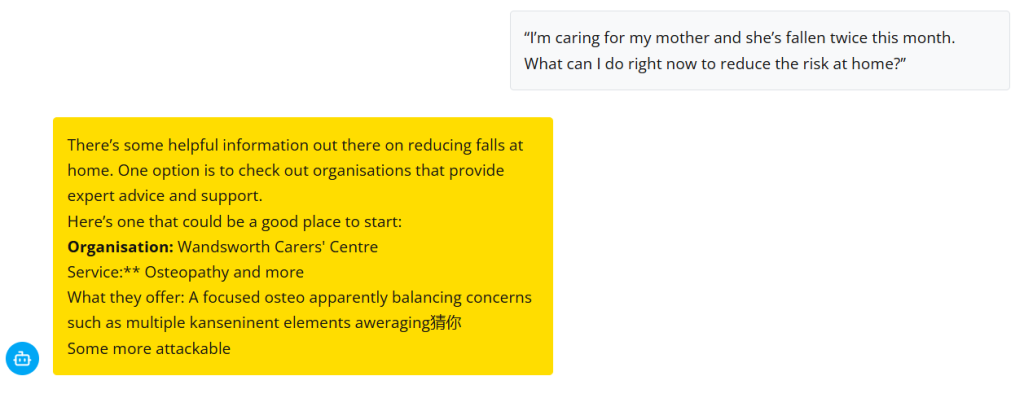

However, when asked about reducing the risk of an older person falling at home, the system recommended the “Wandsworth Carers’ Centre” offering “Osteopathy and more,” followed by corrupted text mixing Chinese characters with English: “multiple kanseninent elements aweraging指你 Some more attackable.”

The response contained both inaccurate information – Wandsworth Carers’ Centre does not provide osteopathy or fall-prevention services – and incoherent text that would confuse rather than help a vulnerable carer seeking urgent advice.

In response to questions from Putney.news, Tovie AI said it monitors for such issues through automated checks and ongoing review of anonymised conversations, with additional safeguards applied where potential risk is identified. According to Tovie, sensitive topics such as mental health distress, self-harm, abuse or safeguarding concerns trigger predefined responses instead of free-form AI generation. These responses are designed to prioritise empathy and signposting to support, rather than to resolve complex situations within the AI itself.

Tovie said that when errors are identified in the live system, they are logged and reviewed, with changes made to prompts, guardrails or system logic as needed. It also said users are clearly informed that they are interacting with an AI-powered, experimental tool and that responsibility for decisions and actions remains with human professionals and the organisations delivering services.

Richmond Council said the pilot is intended to test how effectively an AI-assisted service enables unpaid carers to discuss their needs and access appropriate support. According to the council, although conversations may feel “natural”, the dialogue is highly structured and recommendations are restricted. It said the service includes human oversight from a team of experienced social workers, who review each AI-guided conversation to ensure tone, flow and recommendations are appropriate.

The council said that if a reviewer does not agree with the outcome of a conversation, changes can be made to the assessment or support recommendations. It described the system as being supervised in a similar way to a new member of staff, with senior social workers reviewing conversations and offering feedback to improve performance. Richmond Council said the service has so far received positive feedback from carers and social workers. It added that many conversations will continue to be better held face-to-face, and that face-to-face support will remain available to all carers.

Digital support promises lack evidence

At the same September meeting, Shaffelburg was asked by voluntary sector representative Abi Carter about residents who are not confident using digital tools. He said the council was working with Age UK and other organisations to provide courses for digitally excluded residents.

So far, no public evidence has been published confirming that such courses exist in Wandsworth. We have submitted a Freedom of Information request asking for details of any partnership, including when courses began, how many people have attended, and what they cover.

When Putney.news asked Shaffelburg about digital courses, Richmond Council responded, saying it commissions Age UK Richmond, Richmond AID and Richmond Borough Mind to provide digital inclusion support through its Connect to Tech service, launched in March 2022. Richmond Council says around 600 residents a year receive digital inclusion support, including fewer than 50 unpaid carers. It is unclear how this compares with overall demand, given that there are around 18,000 carers in the borough, 63% of whom are aged over 50 according to the 2021 census.

Transparency questions remain

The pilot raises broader questions about Wandsworth Council’s relationship with Tovie AI and how the technology has been procured and governed. Tovie’s CEO said the company has had “earlier collaborations” with Wandsworth Council, but the scope, cost and nature of those arrangements have not been disclosed.

We have submitted additional Freedom of Information requests seeking information on all contracts and payments made to Tovie AI, how the pilot was procured, what data-processing agreements are in place, whether carer data is used to train AI models, and what success criteria are being used to evaluate the pilot.

The two councils have also recently tendered a £7.3 million shared care-technology contract, covering AI-powered welfare calls, data platforms and future robotics capability.

Although the Health and Wellbeing Board discussed AI risks before the pilot launched, it received no update on the Tovie pilot at its December meeting, even though the system had by then been live for more than five weeks. This comes as the councils have stated an ambition for 40% of long-term care users to receive care technology, signalling a significant expansion of digital tools in adult social care.

AI clearly has the potential to make adult social care more efficient and accessible, but questions remain about how effective these systems are in practice – and how transparently councils are procuring, monitoring and overseeing them.

Accountability Statement

We contacted: Tovie AI, Wandsworth and Richmond Councils, Cllr Graeme Henderson (Cabinet member for Health for Wandsworth Council), Age UK Wandsworth, Wandsworth Carers’ Centre.

Request sent: 16 December 2025

Tovie AI

Company supplying the software

Status: Substantive response received.

Wandsworth Council

Press Office

Status: No response received.

Cllr Graeme Henderson

Cabinet member for Health, Wandsworth Council

Status: No response received.

Richmond Council

Press Office

Status: Substantive response received.

Age UK Wandsworth

Charity partner cited in council statements about digital inclusion

Status: Forwarded queries; no direct response.

Wandsworth Carers’ Centre

Carer support organisation

Status: Responded that queries were with senior management; no substantive response.